Building a Low-Latency CPU-Based Voice Assistant with Streaming TTS

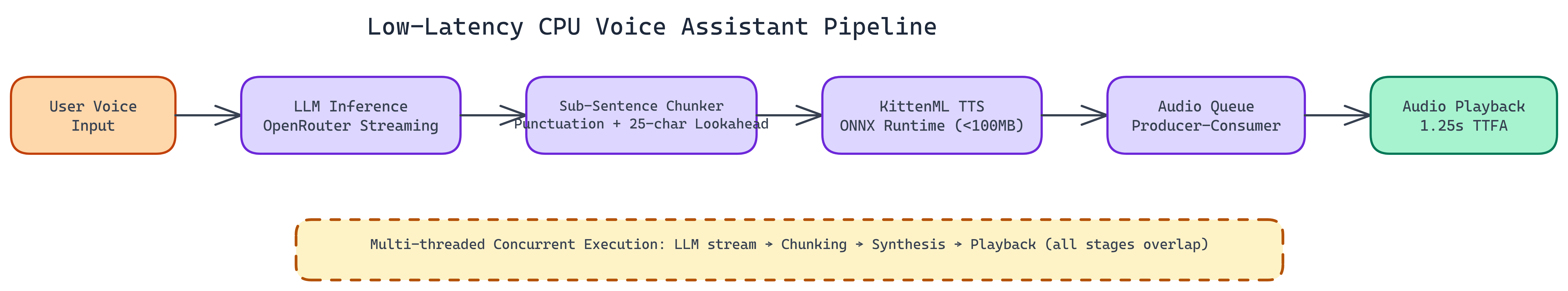

NEO built a sub-1.3-second time-to-first-audio (TTFA) voice assistant that runs entirely on CPU using KittenML’s TTS model, sub-sentence streaming at punctuation boundaries, and a multi-threaded producer-consumer pipeline.

Problem Statement

We asked NEO to build a voice assistant that feels responsive on CPU-only hardware, with no GPU. Most TTS pipelines wait for a full sentence before synthesizing, so LLM generation time and synthesis time stack up before any audio plays. The system should start playback as early as possible (for example, at comma or semicolon boundaries), use a small look-ahead to preserve prosody, and run LLM inference, TTS, and playback as a concurrent pipeline.

Solution Overview

NEO built a CPU-optimized voice assistant that reaches roughly 1.25s TTFA without a GPU:

- Sub-Sentence Streaming: Trigger synthesis at commas and semicolons once a 25-character look-ahead is available, so audio can start mid-sentence without sounding choppy

- Multi-Threaded Pipeline: LLM (OpenRouter) streams tokens → chunking at punctuation → TTS (KittenML, ONNX) per chunk → playback; all stages run concurrently

- Small TTS Model: Under 100 MB, compared with roughly 150 MB or more for Piper or Sherpa-ONNX; loads faster and uses less memory

- ONNX Tuning: Thread affinity and parallelism tuned for high-core-count Windows machines to keep CPU utilization high and cache misses low
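The sub-sentence trigger described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual chunker: the names `chunk_stream` and `_find_cut` are hypothetical, and two behaviors are assumptions, namely that "look-ahead" means at least 25 characters must have arrived after a comma or semicolon before the text up to it is released, and that sentence-ending punctuation flushes immediately.

```python
from typing import Iterable, Iterator, Optional

SOFT_BREAKS = ",;"   # sub-sentence triggers named in the write-up
HARD_BREAKS = ".!?"  # assumption: sentence ends flush without waiting
LOOKAHEAD = 25       # characters that must follow a soft break


def _find_cut(buf: str, lookahead: int) -> Optional[int]:
    """Return the index just past the first usable break, or None."""
    for i, ch in enumerate(buf):
        if ch in HARD_BREAKS:
            return i + 1
        if ch in SOFT_BREAKS and len(buf) - (i + 1) >= lookahead:
            return i + 1
        # A soft break without enough look-ahead is skipped for now;
        # it is re-checked when more tokens arrive.
    return None


def chunk_stream(tokens: Iterable[str], lookahead: int = LOOKAHEAD) -> Iterator[str]:
    """Yield synthesis-ready chunks from a stream of LLM tokens."""
    buf = ""
    for tok in tokens:
        buf += tok
        while (cut := _find_cut(buf, lookahead)) is not None:
            chunk, buf = buf[:cut].strip(), buf[cut:]
            if chunk:
                yield chunk
    tail = buf.strip()
    if tail:  # flush whatever is left when the stream ends
        yield tail


if __name__ == "__main__":
    stream = ["Sure, I can ", "help with that today; ", "just tell me what you need."]
    for piece in chunk_stream(stream):
        print(repr(piece))
```

A short clause such as "Yes, ok." is emitted as one chunk because the sentence ends before 25 characters follow the comma. A production chunker would likely also need to handle decimals and abbreviations, which this sketch treats as sentence ends.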

Workflow / Pipeline

| Step | Description |
|---|---|
| 1. LLM Inference | OpenRouter-hosted model streams tokens to the client |
| 2. Chunking | Watch token stream for punctuation triggers; package chunks for synthesis |
| 3. TTS Synthesis | KittenML model runs on each chunk via ONNX as soon as chunk is ready |
| 4. Audio Playback | Queue synthesized audio and play continuously; pipeline keeps all cores busy |
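The four stages in the table map naturally onto a producer-consumer chain of threads connected by queues. The sketch below shows that shape using only the standard library. Everything in it is illustrative: the stage functions, the `STOP` sentinel, and `run_pipeline` are hypothetical names, the chunking rule is simplified to "flush at trailing punctuation", and `synthesize` and `play` are caller-supplied stand-ins for the real TTS model and audio device.

```python
import queue
import threading
from typing import Callable, Iterable

STOP = None  # sentinel passed down the chain to signal end of stream


def llm_stage(tokens: Iterable[str], out_q: queue.Queue) -> None:
    """Producer: forward streamed tokens (stand-in for a streaming LLM client)."""
    for tok in tokens:
        out_q.put(tok)
    out_q.put(STOP)


def chunk_stage(in_q: queue.Queue, out_q: queue.Queue) -> None:
    """Accumulate tokens and release a chunk at trailing punctuation (simplified)."""
    buf = ""
    while (tok := in_q.get()) is not STOP:
        buf += tok
        if buf.rstrip().endswith((",", ";", ".", "!", "?")):
            out_q.put(buf.strip())
            buf = ""
    if buf.strip():
        out_q.put(buf.strip())
    out_q.put(STOP)


def tts_stage(in_q: queue.Queue, out_q: queue.Queue,
              synthesize: Callable[[str], bytes]) -> None:
    """Synthesize each chunk as soon as it is available."""
    while (text := in_q.get()) is not STOP:
        out_q.put(synthesize(text))
    out_q.put(STOP)


def playback_stage(in_q: queue.Queue, play: Callable[[bytes], None]) -> None:
    """Consumer: play audio buffers in arrival order."""
    while (audio := in_q.get()) is not STOP:
        play(audio)


def run_pipeline(tokens: Iterable[str], synthesize: Callable[[str], bytes],
                 play: Callable[[bytes], None], maxsize: int = 8) -> None:
    # Bounded queues give back-pressure so a fast stage cannot run far ahead.
    token_q, chunk_q, audio_q = (queue.Queue(maxsize) for _ in range(3))
    stages = [
        threading.Thread(target=llm_stage, args=(tokens, token_q)),
        threading.Thread(target=chunk_stage, args=(token_q, chunk_q)),
        threading.Thread(target=tts_stage, args=(chunk_q, audio_q, synthesize)),
        threading.Thread(target=playback_stage, args=(audio_q, play)),
    ]
    for t in stages:
        t.start()
    for t in stages:
        t.join()


if __name__ == "__main__":
    played = []
    run_pipeline(["Hello, ", "this is ", "a test."],
                 synthesize=lambda text: text.upper().encode(),
                 play=played.append)
    print(played)
```

Because each stage is a single thread reading from a FIFO queue, chunk order is preserved end to end. Error handling is omitted here; in a real system an exception in one stage would need to propagate the sentinel so downstream stages do not block forever.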

Technical Details

- Entry point: `voice_assistant_true_streaming.py`

- Requirements: Python 3.12+, OpenRouter API key in `.env`

- TTFA: ~1.25s with sub-sentence streaming; ~1.8–2.5s without

- Target: Edge, dev laptops, cost-sensitive and offline deployments where GPU is not available
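To compare TTFA figures like the ones above across configurations, it helps to measure them the same way each time. The following is a minimal sketch of one way to do that, assuming TTFA is defined as the interval from when the request is issued to when the first audio buffer is handed to playback. The `TTFATimer` class and its method names are hypothetical and not part of the project.

```python
import threading
import time
from typing import Callable, Optional


class TTFATimer:
    """Record time-to-first-audio for a single request."""

    def __init__(self, clock: Callable[[], float] = time.perf_counter) -> None:
        self._clock = clock
        self._lock = threading.Lock()  # start and mark may run on different threads
        self._start: Optional[float] = None
        self._first_audio: Optional[float] = None

    def start(self) -> None:
        """Call when the user request is sent to the LLM."""
        with self._lock:
            self._start = self._clock()
            self._first_audio = None

    def mark_audio(self) -> None:
        """Call from the playback stage for every buffer; only the first counts."""
        with self._lock:
            if self._start is not None and self._first_audio is None:
                self._first_audio = self._clock()

    @property
    def ttfa(self) -> Optional[float]:
        """Seconds from start() to the first mark_audio(), or None if not yet known."""
        with self._lock:
            if self._start is None or self._first_audio is None:
                return None
            return self._first_audio - self._start


if __name__ == "__main__":
    timer = TTFATimer()
    timer.start()
    time.sleep(0.05)    # stand-in for LLM + chunking + synthesis latency
    timer.mark_audio()  # first buffer reaches playback
    timer.mark_audio()  # later buffers do not change the result
    print(f"TTFA: {timer.ttfa:.3f}s")
```

The injectable `clock` argument exists only to make the timer easy to test with a fake clock.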

Repository & Artifacts

Generated Artifacts:

- Streaming LLM → chunking → TTS → playback pipeline

- ONNX Runtime tuning for Windows CPU

- 25-character look-ahead and punctuation-based chunking

- Sub-100 MB TTS model integration
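The write-up does not give the thread settings that were used, so the sketch below only illustrates where such tuning would go in ONNX Runtime. The heuristic in `pick_thread_counts` (leave two cores for the LLM client, chunker, and playback threads) and both function names are assumptions made for illustration; thread affinity is not covered. `make_session_options` imports `onnxruntime` lazily, so the rest of the block runs without it installed.

```python
import os
from typing import Dict, Optional


def pick_thread_counts(logical_cores: Optional[int] = None, reserved: int = 2) -> Dict[str, int]:
    """Hypothetical heuristic: give TTS most cores, keep a few for other stages."""
    cores = logical_cores if logical_cores is not None else (os.cpu_count() or 1)
    return {
        "intra_op_num_threads": max(1, cores - reserved),  # parallelism inside one operator
        "inter_op_num_threads": 1,  # one operator at a time (sequential execution)
    }


def make_session_options(counts: Dict[str, int]):
    """Apply the counts to an onnxruntime.SessionOptions object."""
    import onnxruntime as ort  # imported here so the sketch loads without the package

    opts = ort.SessionOptions()
    opts.intra_op_num_threads = counts["intra_op_num_threads"]
    opts.inter_op_num_threads = counts["inter_op_num_threads"]
    opts.execution_mode = ort.ExecutionMode.ORT_SEQUENTIAL
    opts.graph_optimization_level = ort.GraphOptimizationLevel.ORT_ENABLE_ALL
    return opts


if __name__ == "__main__":
    counts = pick_thread_counts()
    print(counts)
    # With onnxruntime installed and a model file available (path is a placeholder):
    # session = ort.InferenceSession("model.onnx", sess_options=make_session_options(counts))
```

The right values depend on the machine and on how much CPU the other pipeline stages need, so they are best chosen by measuring TTFA and checking for playback dropouts under load.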

Best Practices & Lessons Learned

- Sub-sentence streaming is the main lever for TTFA on CPU.

- 25-character look-ahead was chosen empirically for prosody; smaller values can break naturalness.

- ONNX thread configuration and audio queue management need tuning under load to avoid pops/dropouts.

- Below ~1.5s TTFA the interaction feels conversational; above it feels like waiting.