LLM Council: Route Tasks Across Models and Aggregate Answers

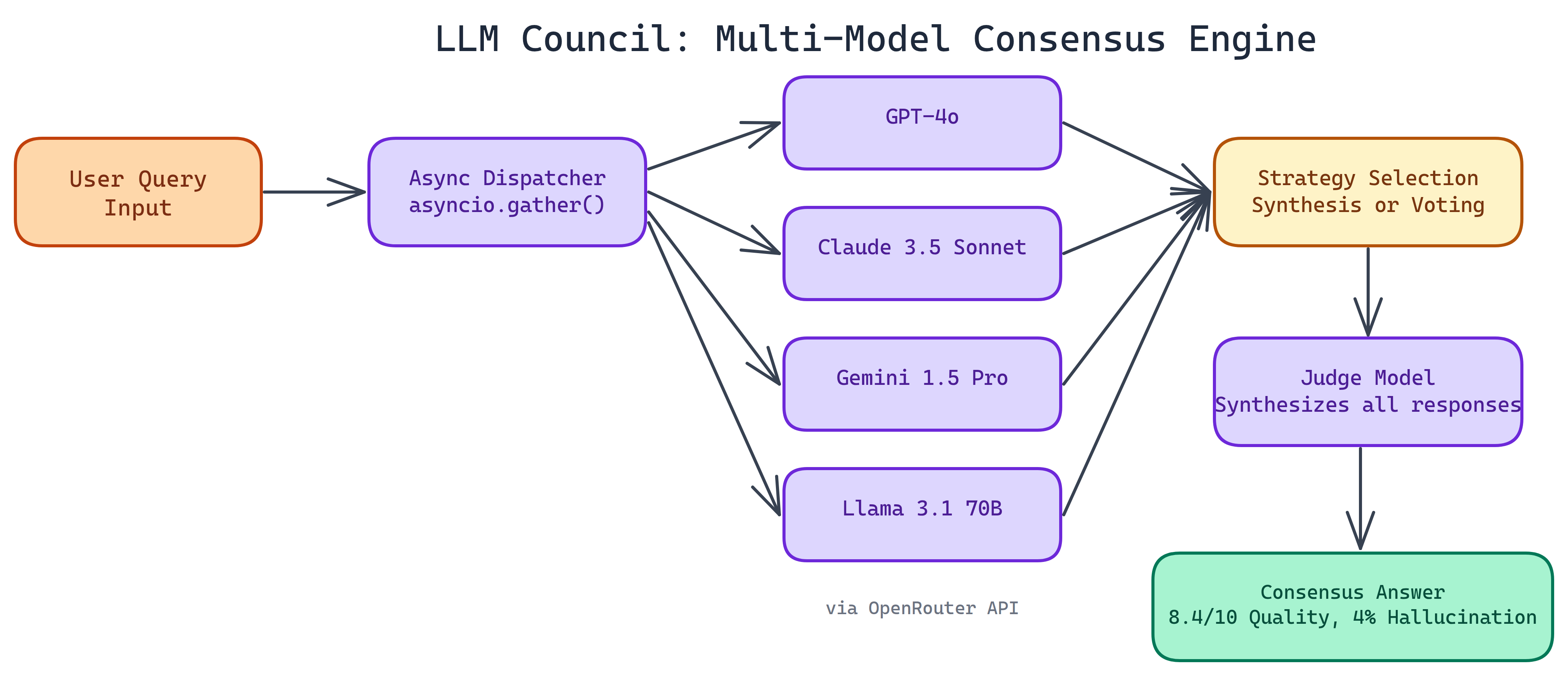

NEO built a council-style layer in which several LLMs propose answers, critique one another, and have their outputs merged into a single result. This spreads risk on important prompts, so you are not betting everything on one model.

Problem Statement

We asked NEO to wire up a flow that runs multiple LLMs on the same task, compares what comes back, and then surfaces either a consensus or a ranked answer. The goal is to reduce the blind spots and overconfidence that come from relying on a single vendor or a single set of model weights.

Solution Overview

NEO shipped LLM Council with:

- Multi-model routing: Pick providers and models per stage.

- Deliberation rounds: Optional critique and revision between models.

- Aggregation: Vote, score, or use a judge model to pick the final answer.
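The vote and score options can be illustrated with a minimal sketch. The function names (`normalize`, `majority_vote`, `best_scored`) and the model labels are hypothetical and are not taken from the LLM Council code; the sketch assumes candidate answers arrive as a mapping from model name to text.

```python
from collections import Counter


def normalize(answer: str) -> str:
    """Lowercase and collapse whitespace so trivially different answers match."""
    return " ".join(answer.lower().split())


def majority_vote(candidates: dict[str, str]) -> tuple[str, float]:
    """Return the most common answer and the fraction of models that gave it."""
    if not candidates:
        raise ValueError("no candidates to aggregate")
    counts = Counter(normalize(text) for text in candidates.values())
    winner, votes = counts.most_common(1)[0]
    # Return the first original answer that matches the winning normalized form.
    original = next(t for t in candidates.values() if normalize(t) == winner)
    return original, votes / len(candidates)


def best_scored(candidates: dict[str, str], scores: dict[str, float]) -> str:
    """Return the answer from the model with the highest score.

    Models without a score are treated as lowest priority (an assumption).
    """
    best_model = max(candidates, key=lambda m: scores.get(m, float("-inf")))
    return candidates[best_model]


# Hypothetical outputs from three models.
candidates = {"model_a": "Paris", "model_b": "paris ", "model_c": "Lyon"}
answer, agreement = majority_vote(candidates)
```

Exact-match voting like this only suits short, closed-form answers. For free-form text, a judge model or a scoring function is usually the more practical choice.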

Workflow / Pipeline

| Step | Description |
|---|---|
| 1. Task ingest | User prompt and constraints; council configuration loaded |
| 2. Parallel propose | Each model generates a candidate answer |
| 3. Critique / refine | Optional cross-model feedback and second pass |
| 4. Aggregate | Judge or heuristic selects final output and rationale |
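The four steps can be sketched end to end as follows. This is an illustration under stated assumptions, not the orchestration service NEO generated: models are stand-in callables that map a prompt to text, the judge is a simple heuristic, and every name (`propose`, `refine`, `run_council`, `stub_judge`) is hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Model = Callable[[str], str]                         # prompt -> answer
Judge = Callable[[str, dict[str, str]], tuple[str, str]]  # -> (winner, rationale)


def propose(models: dict[str, Model], prompt: str) -> dict[str, str]:
    """Step 2: ask every model for a candidate answer in parallel."""
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        return {name: future.result() for name, future in futures.items()}


def refine(models: dict[str, Model], prompt: str,
           drafts: dict[str, str]) -> dict[str, str]:
    """Step 3: show each model its peers' drafts and ask for a revision."""
    revised = {}
    for name, fn in models.items():
        peers = "\n".join(f"- {text}" for other, text in drafts.items()
                          if other != name)
        revised[name] = fn(f"{prompt}\n\nPeer answers:\n{peers}\n\n"
                           "Revise your answer.")
    return revised


def run_council(models: dict[str, Model], judge: Judge, prompt: str,
                rounds: int = 0) -> dict:
    """Steps 1-4: ingest the task, propose, optionally refine, aggregate."""
    if not models:
        raise ValueError("council needs at least one model")
    answers = propose(models, prompt)
    for _ in range(rounds):
        answers = refine(models, prompt, answers)
    winner, rationale = judge(prompt, answers)
    return {"final": answers[winner], "winner": winner,
            "rationale": rationale, "candidates": answers}


def stub_judge(prompt: str, answers: dict[str, str]) -> tuple[str, str]:
    """Heuristic judge: pick the model whose answer is most widely shared."""
    texts = list(answers.values())
    winner = max(answers, key=lambda name: texts.count(answers[name]))
    return winner, f"{texts.count(answers[winner])} of {len(texts)} models agreed"


# Stand-in models; real ones would call a provider API.
models = {"model_a": lambda p: "4", "model_b": lambda p: "4",
          "model_c": lambda p: "5"}
result = run_council(models, stub_judge, "What is 2 + 2?", rounds=1)
```

Threads fit this sketch because model calls are typically I/O-bound network requests. An async client would serve equally well.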

Repository & Artifacts

Generated Artifacts:

- Orchestration service and YAML/JSON configs for councils

- Logging of per-model outputs for audit

- Pluggable aggregation strategies
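
To make these artifacts concrete, here is a rough sketch of how a JSON council config, a strategy registry, and per-model audit logging might fit together. The config schema, the strategy names (`first`, `longest`), the model identifiers, and the logger name are all assumptions made for illustration; the generated repository may organize them differently.

```python
import json
import logging

logger = logging.getLogger("council.audit")  # hypothetical logger name

AGGREGATORS = {}  # strategy name -> function(candidates) -> final answer


def register(name):
    """Decorator that adds an aggregation strategy to the registry."""
    def decorator(fn):
        AGGREGATORS[name] = fn
        return fn
    return decorator


@register("first")
def first_answer(candidates):
    return next(iter(candidates.values()))


@register("longest")
def longest_answer(candidates):
    return max(candidates.values(), key=len)


# Hypothetical config; the field names are illustrative only.
CONFIG_JSON = """
{
  "name": "demo-council",
  "models": ["provider_a/model-x", "provider_b/model-y"],
  "deliberation_rounds": 1,
  "aggregation": {"strategy": "longest"}
}
"""


def load_council(text):
    """Parse a council config and check that its strategy is registered."""
    config = json.loads(text)
    strategy = config["aggregation"]["strategy"]
    if strategy not in AGGREGATORS:
        raise ValueError(f"unknown aggregation strategy: {strategy!r}")
    return config


def aggregate_with_audit(config, candidates):
    """Log each model's output as a JSON line, then apply the strategy."""
    for model, output in candidates.items():
        # Handlers and log levels are left to the host application.
        logger.info(json.dumps({"council": config["name"],
                                "model": model, "output": output}))
    return AGGREGATORS[config["aggregation"]["strategy"]](candidates)


config = load_council(CONFIG_JSON)
final = aggregate_with_audit(config, {"provider_a/model-x": "short",
                                      "provider_b/model-y": "a longer answer"})
```

With a registry like this, adding a strategy means writing one decorated function, and switching strategies means changing one config value.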