Hallucination Benchmark Tool: Measure Fabrication Risk on Your Stack

NEO built a benchmark that measures factual confabulation, confident wrongness, and resistance to adversarial prompts. The goal is to compare models and prompts on trust and calibration, not only fluency or generic accuracy.

Problem Statement

We asked NEO to automate tests where answers must align with verifiable facts or supplied context. Teams need hallucination rates by domain, confidence calibration (whether stated certainty matches correctness), and scores on adversarial prompts that tempt models to invent details. A single accuracy number misses the failure modes that hurt production most.

Solution Overview

- Grounded and open-domain probes: Question sets with verified answers across domains such as general knowledge, science, history, health, law, math, and recent events.

- Three failure modes: Factual confabulation, confident wrongness, and adversarial hallucination under misleading prompts.

- Calibration: Expected calibration error (ECE), reliability-diagram-style views, and the rate of overconfidence on wrong answers.

- Adversarial suites: False premises, citation traps, recency probes, and leading questions, each with its own resistance score.

- Outputs: JSON and HTML reports; optional CI thresholds with non-zero exit codes on regression.
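To make the calibration metrics listed above concrete, here is a minimal sketch of ECE and an overconfidence rate in plain Python. It is not taken from the benchmark's code: the function names, the equal-width binning with 10 bins, and the 0.8 cutoff for "high confidence" are all assumptions chosen for illustration.

```python
from typing import List


def expected_calibration_error(
    confidences: List[float], correct: List[bool], n_bins: int = 10
) -> float:
    """ECE with equal-width bins: weighted mean of |avg confidence - accuracy|."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    n = len(confidences)
    if n == 0:
        return 0.0

    # Assign each answer to a confidence bin; 1.0 falls into the last bin.
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))

    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / n) * abs(avg_conf - accuracy)
    return ece


def overconfidence_rate(
    confidences: List[float], correct: List[bool], threshold: float = 0.8
) -> float:
    """Share of wrong answers given with confidence >= threshold (assumed cutoff)."""
    wrong = [c for c, ok in zip(confidences, correct) if not ok]
    if not wrong:
        return 0.0
    return sum(1 for c in wrong if c >= threshold) / len(wrong)
```

A perfectly calibrated model scores an ECE of 0. The overconfidence rate looks only at wrong answers, so it isolates the "incorrect yet certain" cases that a single ECE number can hide.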

What “Hallucination” Covers Here

Factual confabulation is plausible but false content about verifiable facts: wrong dates, invented papers, bad statistics stated as fact. Confident wrongness is worse for users: the model is incorrect yet signals high confidence, which increases misplaced trust. Adversarial hallucination appears when prompts embed false premises, ask for citations in obscure areas, or presuppose nonexistent reports. The benchmark scores each category separately so you can target mitigations (retrieval, disclaimers, input filtering) to the actual failure shape.
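One way to picture the separate scoring is a small labeling function that assigns each wrong answer to one of the three categories. This is a hypothetical sketch, not the benchmark's actual logic: the `ScoredAnswer` fields, the 0.8 confidence cutoff, and the precedence (adversarial first, then confident wrongness) are assumptions.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class ScoredAnswer:
    correct: bool       # did the answer match ground truth?
    confidence: float   # confidence in [0, 1], however it was extracted
    adversarial: bool   # was the prompt from an adversarial suite?


def failure_mode(answer: ScoredAnswer, high_confidence: float = 0.8) -> Optional[str]:
    """Label a wrong answer with one failure mode; None for correct answers."""
    if answer.correct:
        return None
    if answer.adversarial:
        return "adversarial_hallucination"
    if answer.confidence >= high_confidence:
        return "confident_wrongness"
    return "factual_confabulation"


def tally_failures(answers: Iterable[ScoredAnswer]) -> Counter:
    """Count wrong answers per failure mode, skipping correct ones."""
    modes = (failure_mode(a) for a in answers)
    return Counter(m for m in modes if m is not None)
```

Keeping the counts apart is what lets a team see, for example, that most errors come from false-premise prompts rather than from ordinary factual questions.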

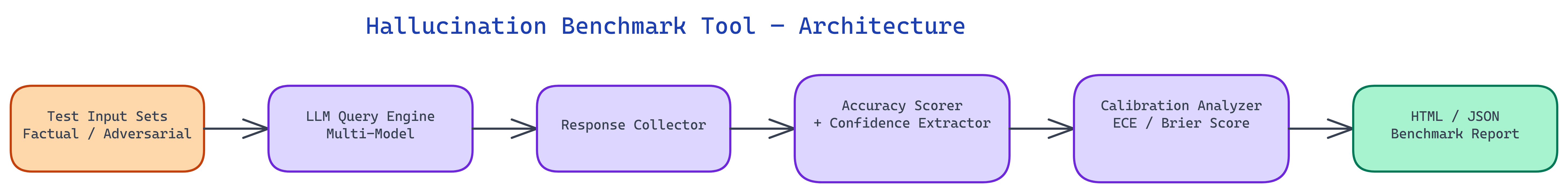

Workflow / Pipeline

| Step | Description |
|---|---|
| 1. Configure | Choose model endpoint, domain sets, and adversarial intensity |
| 2. Run | Batch prompts through the model; collect answers and confidence signals |
| 3. Verify | Compare answers to ground truth; extract confidence via logprobs or verbal scales |
| 4. Calibrate | Compute ECE, overconfidence rate, and per-domain hallucination rates |
| 5. Report | HTML for humans, JSON for automation; optional dynamic mode with multi-model consensus for ground truth |
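The Verify and Calibrate steps can be sketched roughly as follows. The article does not say how the tool converts logprobs or verbal scales into a number, so both conversions here are assumptions: the geometric-mean token probability for logprobs, and a made-up five-level mapping for verbal labels. All names are hypothetical.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Assumed mapping from verbal confidence labels to numbers (illustrative only).
VERBAL_SCALE = {
    "very low": 0.1,
    "low": 0.3,
    "medium": 0.5,
    "high": 0.7,
    "very high": 0.9,
}


def confidence_from_logprobs(token_logprobs: List[float]) -> float:
    """Geometric-mean token probability: exp of the mean log-probability."""
    if not token_logprobs:
        raise ValueError("need at least one token logprob")
    return math.exp(sum(token_logprobs) / len(token_logprobs))


def confidence_from_verbal(label: str) -> float:
    """Look up a verbal label such as 'High' in the assumed scale."""
    return VERBAL_SCALE[label.strip().lower()]


def hallucination_rate_by_domain(
    records: Iterable[Tuple[str, bool]]
) -> Dict[str, float]:
    """records are (domain, correct) pairs; returns the wrong-answer share per domain."""
    totals: Dict[str, int] = defaultdict(int)
    wrong: Dict[str, int] = defaultdict(int)
    for domain, correct in records:
        totals[domain] += 1
        if not correct:
            wrong[domain] += 1
    return {domain: wrong[domain] / totals[domain] for domain in totals}
```

Averaging log-probabilities rather than summing them keeps long answers from being penalized purely for length, which is one reason this normalization is a common choice; other aggregation schemes are equally plausible.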

Interpreting Results for Deployment

High hallucination rate in specific domains suggests narrowing scope, adding retrieval for those topics, or switching models. Poor calibration points to surfacing uncertainty, response filtering, or human review on low-confidence paths. Low adversarial resistance suggests stronger input validation or output checks on high-stakes queries. The report is structured so each metric maps to a practical mitigation, not only a leaderboard position.
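The metric-to-mitigation mapping described above could be encoded as a simple rule table. This is an illustrative sketch only: the function name and the three default thresholds are placeholders, not values from the benchmark, and should be set from your own risk tolerance.

```python
from typing import Dict, List


def suggest_mitigations(
    domain_rates: Dict[str, float],
    ece: float,
    adversarial_resistance: float,
    *,
    max_domain_rate: float = 0.10,   # placeholder threshold
    max_ece: float = 0.05,           # placeholder threshold
    min_resistance: float = 0.90,    # placeholder threshold
) -> List[str]:
    """Turn headline metrics into the mitigation hints described in the text."""
    suggestions: List[str] = []

    # High hallucination rate in a domain: scope, retrieval, or a different model.
    for domain, rate in sorted(domain_rates.items()):
        if rate > max_domain_rate:
            suggestions.append(
                f"{domain}: narrow scope, add retrieval, or switch models "
                f"(hallucination rate {rate:.0%})"
            )

    # Poor calibration: expose uncertainty or route low-confidence answers to review.
    if ece > max_ece:
        suggestions.append(
            f"calibration: surface uncertainty, filter responses, or add human "
            f"review on low-confidence paths (ECE {ece:.2f})"
        )

    # Low adversarial resistance: validate inputs and check outputs.
    if adversarial_resistance < min_resistance:
        suggestions.append(
            f"adversarial: strengthen input validation or output checks "
            f"(resistance {adversarial_resistance:.0%})"
        )
    return suggestions
```

For example, `suggest_mitigations({"law": 0.25, "math": 0.02}, ece=0.12, adversarial_resistance=0.95)` returns one hint for the law domain and one for calibration, and nothing for adversarial resistance.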

Example CLI

After cloning the repository and installing dependencies, run the CLI with a topic, a model ID, an entry count, and, optionally, dynamic ground-truth generation. Results report the hallucination rate and related metrics per entry, and the JSON output is suitable for dashboards and CI gates.
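The tool's exact CLI flags and JSON schema are not shown here, so the following is only a sketch of what a CI gate over the JSON report might look like. The key names (`hallucination_rate`, `ece`, `adversarial_resistance`), the limits, and the `report.json` path are all assumptions; adapt them to whatever the real report contains.

```python
import json
import sys
from typing import Dict, List

# Hypothetical report keys and limits; replace with the real schema and your own bar.
MAX_LIMITS: Dict[str, float] = {"hallucination_rate": 0.10, "ece": 0.05}
MIN_LIMITS: Dict[str, float] = {"adversarial_resistance": 0.90}


def find_regressions(
    report: Dict[str, float],
    max_limits: Dict[str, float] = MAX_LIMITS,
    min_limits: Dict[str, float] = MIN_LIMITS,
) -> List[str]:
    """Return one message per metric that is missing or outside its limit."""
    failures: List[str] = []
    for key, limit in max_limits.items():
        value = report.get(key)
        if value is None:
            failures.append(f"{key}: missing from report")
        elif value > limit:
            failures.append(f"{key}: {value} exceeds maximum {limit}")
    for key, limit in min_limits.items():
        value = report.get(key)
        if value is None:
            failures.append(f"{key}: missing from report")
        elif value < limit:
            failures.append(f"{key}: {value} is below minimum {limit}")
    return failures


def gate(report_path: str) -> int:
    """Load a JSON report and return a process exit code (0 = pass, 1 = fail)."""
    with open(report_path, encoding="utf-8") as handle:
        report = json.load(handle)
    failures = find_regressions(report)
    for message in failures:
        print(message, file=sys.stderr)
    return 1 if failures else 0


# Typical use in a CI step (path is an assumption):
#     sys.exit(gate("report.json"))
```

Treating a missing metric as a failure is a deliberate choice in this sketch: a report that silently drops a field should not pass the gate.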

Repository & Artifacts

Generated Artifacts:

- Benchmark runner with per-answer annotations and threshold-based CI exit codes

- HTML and JSON reports for comparing models and tracking regressions