GitHub Repo Query Agent: Ask Questions Across Any Repository

NEO built an agent that accepts a public GitHub URL, clones the repository, and answers plain-language questions about structure, dependencies, and behavior. The goal is to replace hours of manual file-by-file reading with a conversational pass over the codebase.

Problem Statement

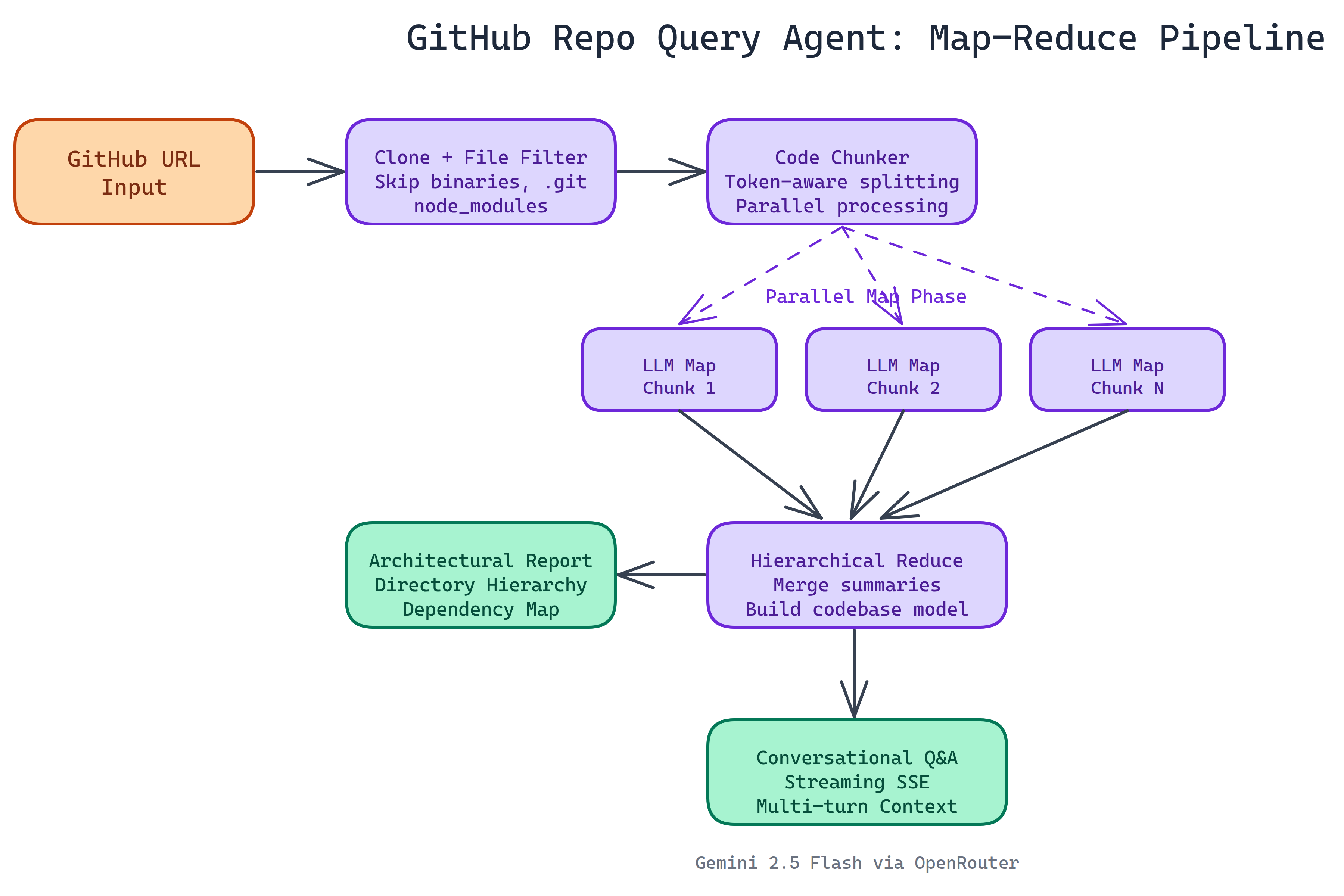

We asked NEO to let developers ask questions about unfamiliar repositories without opening every folder. Large codebases do not fit in a single LLM context window, so the system must chunk, summarize in parallel, and merge results into a coherent picture before chat turns are answered.

Solution Overview

- Ingest: Clone the repo; walk the tree and filter noise such as binaries, .git, and node_modules.

- Map-reduce analysis: Split code into chunks; process chunks in parallel; hierarchically merge summaries into one architectural report (layout, components, dependencies, data flow).

- Chat: Multi-turn conversation with streaming responses, grounded in the merged understanding.

- Transport: Server-Sent Events so analysis and answers stream to the browser without polling.
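The ingest step above can be sketched as a directory walk that prunes noise before anything reaches the LLM. This is a minimal illustration, not the project's actual code: the names `SKIP_DIRS`, `looks_binary`, and `iter_source_files` are hypothetical, and the NUL-byte check is an assumed heuristic for spotting binaries.

```python
import os
from pathlib import Path

# Assumed skip list: the write-up names only .git and node_modules.
SKIP_DIRS = {".git", "node_modules"}


def looks_binary(path: Path, sample_size: int = 1024) -> bool:
    """Heuristic: treat a file as binary if its first bytes contain a NUL."""
    try:
        with path.open("rb") as fh:
            return b"\x00" in fh.read(sample_size)
    except OSError:
        return True  # unreadable files are skipped as well


def iter_source_files(root: str):
    """Yield text-file paths under root, relative to root."""
    root_path = Path(root)
    for dirpath, dirnames, filenames in os.walk(root_path):
        # Prune in place so os.walk never descends into skipped directories.
        dirnames[:] = sorted(d for d in dirnames if d not in SKIP_DIRS)
        for name in sorted(filenames):
            full = Path(dirpath) / name
            if not looks_binary(full):
                yield full.relative_to(root_path)
```

Pruning `dirnames` in place matters on large repositories: a dependency folder is skipped without ever being listed, rather than walked and discarded file by file.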

Map-Reduce at a Glance

A monorepo with hundreds of thousands of lines cannot be pasted into one prompt. The pipeline maps over chunks (each summarized or structured independently), then reduces in stages until a single document captures cross-cutting concerns. That preserves relationships that a naive per-file summary would blur, and it keeps each stage within token limits so runs fail predictably rather than silently truncating.
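A rough sketch of that map-then-reduce shape follows. It assumes a `summarize` callable that wraps the LLM call; that callable, the `fan_in` group size, and the character-based chunk limit are all illustrative choices, not details confirmed by the project.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def chunk_text(text: str, max_chars: int) -> List[str]:
    """Split text on line boundaries into chunks of roughly max_chars.

    A single line longer than max_chars becomes its own oversized chunk.
    """
    chunks, current, size = [], [], 0
    for line in text.splitlines(keepends=True):
        if current and size + len(line) > max_chars:
            chunks.append("".join(current))
            current, size = [], 0
        current.append(line)
        size += len(line)
    if current:
        chunks.append("".join(current))
    return chunks


def map_reduce(
    chunks: List[str],
    summarize: Callable[[str], str],  # hypothetical wrapper around the LLM call
    fan_in: int = 4,
    workers: int = 8,
) -> str:
    """Summarize chunks in parallel, then merge summaries level by level."""
    if fan_in < 2:
        raise ValueError("fan_in must be at least 2 or the reduce never shrinks")
    if not chunks:
        return ""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Map: every chunk is summarized independently.
        level = list(pool.map(summarize, chunks))
        # Reduce: merge groups of fan_in summaries until one document remains.
        while len(level) > 1:
            groups = [
                "\n".join(level[i:i + fan_in])
                for i in range(0, len(level), fan_in)
            ]
            level = list(pool.map(summarize, groups))
    return level[0]
```

Because each reduce input is at most `fan_in` summaries, its size stays bounded at every level, which is what keeps each stage inside the token limit regardless of repository size.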

Workflow / Pipeline

| Step | Description |
|---|---|
| 1. Input URL | User provides a public GitHub repository URL |
| 2. Clone and filter | Shallow clone where appropriate; skip binary and dependency noise paths |
| 3. Map-reduce | Chunk sources; parallel map; hierarchical reduce to an architecture report |
| 4. Query | Chat uses the report plus retrieval as needed; SSE streams tokens to the UI |
| 5. Follow-up | Multi-turn conversation with context management as history grows |
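To make the streaming in step 4 concrete, here is a small sketch of how tokens might be framed as Server-Sent Events. The event names `token` and `done`, the JSON payload shape, and the function names are assumptions for illustration; only the `event:` and `data:` field syntax comes from the SSE format itself.

```python
import json
from typing import Iterable, Iterator, Optional


def sse_event(data: str, event: Optional[str] = None) -> str:
    """Format one Server-Sent Events message, ended by a blank line."""
    lines = []
    if event:
        lines.append(f"event: {event}")
    # A multi-line payload needs one "data:" field per line.
    for part in data.split("\n"):
        lines.append(f"data: {part}")
    return "\n".join(lines) + "\n\n"


def stream_answer(tokens: Iterable[str]) -> Iterator[str]:
    """Wrap each model token in an SSE message, then signal completion."""
    for token in tokens:
        # JSON-encode so newlines inside a token survive the line-based framing.
        yield sse_event(json.dumps({"token": token}), event="token")
    yield sse_event("{}", event="done")


if __name__ == "__main__":
    for message in stream_answer(["Hello", ",", " world"]):
        print(message, end="")
```

In a Flask app, a generator like this could be returned as `Response(stream_answer(tokens), mimetype="text/event-stream")`, and the browser would consume it with `EventSource`. Whether the project wires it exactly this way is not stated in the write-up.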

Stack and Practical Limits

The reference stack is Python and Flask on the server, with a terminal-styled web UI aimed at developers. The LLM is an OpenRouter model selected through environment variables, with a Gemini Flash-class model as a typical default. Public repositories are the supported path out of the box; private repos would require token-based access as a future extension.
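One way the environment-driven model selection could look is sketched below. The variable names `OPENROUTER_API_KEY` and `LLM_MODEL`, the `LLMConfig` class, and the default model string are hypothetical; the default is a placeholder rather than a verified OpenRouter model ID. The base URL is OpenRouter's OpenAI-compatible endpoint as commonly documented, so confirm it against the current docs before relying on it.

```python
import os
from dataclasses import dataclass
from typing import Mapping

# Placeholder slug, not a verified model ID: substitute a real OpenRouter model.
DEFAULT_MODEL = "example/flash-model"
# Assumed OpenAI-compatible OpenRouter endpoint.
OPENROUTER_BASE_URL = "https://openrouter.ai/api/v1"


@dataclass(frozen=True)
class LLMConfig:
    api_key: str
    model: str
    base_url: str


def load_config(env: Mapping[str, str] = os.environ) -> LLMConfig:
    """Read LLM settings from the environment, failing fast if the key is missing."""
    api_key = env.get("OPENROUTER_API_KEY", "")  # assumed variable name
    if not api_key:
        raise RuntimeError("OPENROUTER_API_KEY is not set")
    return LLMConfig(
        api_key=api_key,
        model=env.get("LLM_MODEL", DEFAULT_MODEL),  # assumed variable name
        base_url=env.get("OPENROUTER_BASE_URL", OPENROUTER_BASE_URL),
    )
```

Note that values in a .env file are not placed in the process environment automatically; this sketch assumes something upstream (a loader such as python-dotenv, or the shell) has already exported them.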

Typical local run: install dependencies, set API keys in .env, start the Flask app, open the local URL, paste a repo URL, wait for the architectural report, then ask questions about authentication, modules, or data flow.

Use Cases

- Onboarding onto a new service or library.

- Code review context before reading a large diff.

- Due diligence on open source dependencies.

- Starter documentation from the generated architecture summary.

In each case the value is faster orientation without replacing careful reading where risk is high.

Repository & Artifacts

Generated Artifacts:

- Flask app with SSE streaming and configurable model strings

- Map-reduce pipeline scaffolding for large repository analysis